Scrolling through a news feed often feels like playing Two Truths and a Lie.

Some falsehoods are easy to spot. Like reports that First Lady Melania Trump wanted an exorcist to cleanse the White House of Obama-era demons, or that an Ohio school principal was arrested for defecating in front of a student assembly. In other cases, fiction blends a little too well with fact. Was CNN really raided by the Federal Communications Commission? Did cops actually find a meth lab inside an Alabama Walmart? No and no. But anyone scrolling through a slew of stories could easily be fooled.

We live in a golden age of misinformation. On Twitter, falsehoods spread further and faster than the truth (SN: 3/31/18, p. 14). In the run-up to the 2016 U.S. presidential election, the most popular bogus articles got more Facebook shares, reactions and comments than the top real news, according to a BuzzFeed News analysis.

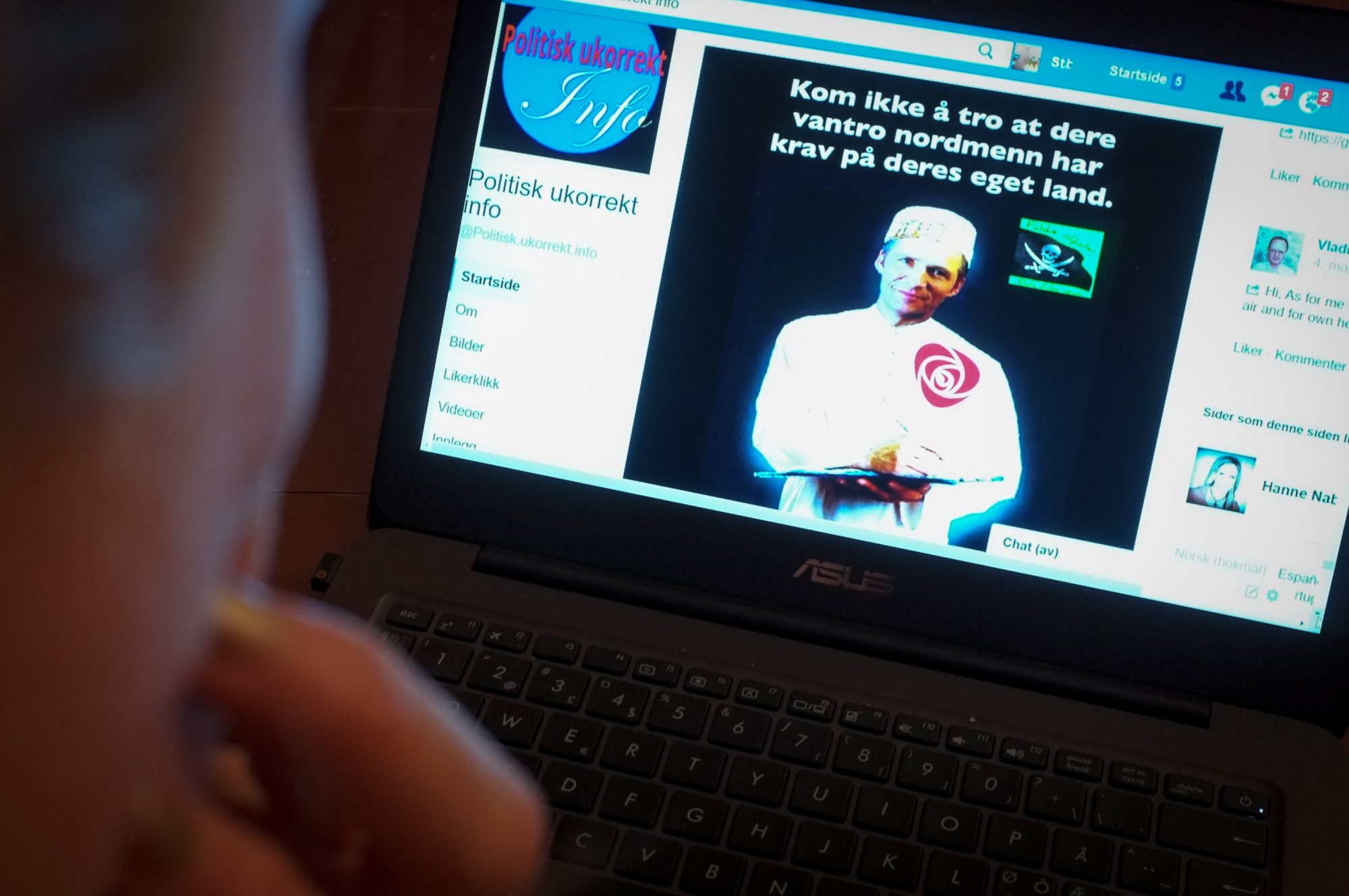

Before the internet, “you could not have a person sitting in an attic and producing conspiracy theories at a mass scale,” says Luca de Alfaro, a computer scientist at the University of California, Santa Cruz. But with today’s social media, peddling lies is all too easy, whether those lies come from outfits like Disinfomedia, a company that has owned several fake news websites, or from a scrum of teenagers in Macedonia who raked in cash by writing popular fake news during the 2016 election.

Most internet users probably aren’t intentionally broadcasting bunk. Information overload and the average Web surfer’s limited attention span aren’t exactly conducive to fact-checking vigilance. Confirmation bias feeds in as well. “When you’re dealing with unfiltered information, it’s likely that people will choose something that conforms to their own thinking, even if that information is false,” says Fabiana Zollo, a computer scientist at Ca’ Foscari University of Venice in Italy who studies how information circulates on social networks.

Intentional or not, sharing misinformation can have serious consequences. Fake news doesn’t just threaten the integrity of elections and erode public trust in real news. It threatens lives. False rumors that spread on WhatsApp, a smartphone messaging system, for instance, incited lynchings in India this year that left more than a dozen people dead.

To help sort fake news from truth, programmers are building automated systems that judge the veracity of online stories. A computer program might consider certain characteristics of an article or the reception an article gets on social media. Computers that recognize certain warning signs could alert human fact-checkers, who would do the final verification.

Automatic lie-finding tools are “still in their infancy,” says computer scientist Giovanni Luca Ciampaglia of Indiana University Bloomington. Researchers are exploring which factors most reliably peg fake news. Unfortunately, they have no agreed-upon set of true and false stories to use for testing their tactics. Some programmers rely on established media outlets or state press agencies to determine which stories are true or not, while others draw from lists of reported fake news on social media. So research in this area is something of a free-for-all.

But teams around the world are forging ahead because the internet is a fire hose of information, and asking human fact-checkers to keep up is like aiming that hose at a Brita filter. “It’s sort of mind-numbing,” says Alex Kasprak, a science writer at Snopes, the oldest and largest online fact-checking site, “just the volume of really shoddy stuff that’s out there.”

Reader referrals

Visitors to real news websites mainly reach those sites directly or through search engine results. Fake news sites attract a much higher share of their incoming web traffic through links on social media.

H. THOMPSON

Substance and style

When it comes to inspecting news content directly, there are two major ways to tell if a story fits the bill for fraudulence: what the author is saying and how the author is saying it.

Ciampaglia and colleagues automated the tedious task of checking what an author says with a program that tests how closely related a statement’s subject and object are. To do this, the program uses a vast network of nouns built from facts found in the infoboxes on the right side of Wikipedia pages, although similar networks have been built from other reservoirs of knowledge, like research databases.

In the Ciampaglia group’s noun network, two nouns are connected if one noun appears in the infobox of the other. The fewer degrees of separation between a statement’s subject and object in this network, and the more specific the intermediate words connecting subject and object, the more likely the computer program is to label a statement as true.

Take the false statement “Barack Obama is a Muslim.” There are seven degrees of separation between “Obama” and “Islam” in the noun network, including very general nouns, such as “Canada,” that connect to many other words. Given this long, meandering route, the automated fact-checker, described in 2015 in PLOS ONE, deemed Obama unlikely to be Muslim.
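The path-based idea can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the researchers' implementation: the toy edges are invented, the names `build_graph`, `shortest_path` and `support_score` are hypothetical, and the scoring rule (penalizing each intermediate noun by the logarithm of its number of connections) is just one plausible way to make short paths through specific nouns score higher than long paths through general ones. For simplicity the sketch scores only a single shortest path.

```python
from collections import deque
from math import log

# Hypothetical toy network: these edges are invented for illustration and
# are NOT taken from Wikipedia infoboxes or from the published network.
EDGES = [
    ("Obama", "Hawaii"),
    ("Hawaii", "United States"),
    ("United States", "Canada"),
    ("Canada", "Religion"),
    ("Religion", "Islam"),
    ("Obama", "Michelle Obama"),
    ("Canada", "Ottawa"),
    ("Canada", "Hockey"),
]


def build_graph(edges):
    """Build an undirected adjacency map: noun -> set of connected nouns."""
    graph = {}
    for a, b in edges:
        graph.setdefault(a, set()).add(b)
        graph.setdefault(b, set()).add(a)
    return graph


def shortest_path(graph, start, goal):
    """Breadth-first search; returns one fewest-hop path, or None."""
    if start not in graph or goal not in graph:
        return None
    previous = {start: None}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if node == goal:
            path = []
            while node is not None:
                path.append(node)
                node = previous[node]
            return path[::-1]
        for neighbor in sorted(graph[node]):
            if neighbor not in previous:
                previous[neighbor] = node
                queue.append(neighbor)
    return None


def support_score(graph, subject, obj):
    """Score in (0, 1]: higher for short paths through specific nouns.

    Assumed scoring rule: each intermediate noun adds log(its number of
    connections) to a penalty, so general hubs such as "Canada" cost more.
    """
    path = shortest_path(graph, subject, obj)
    if path is None:
        return 0.0
    penalty = sum(log(len(graph[noun])) for noun in path[1:-1])
    return 1.0 / (1.0 + penalty)


graph = build_graph(EDGES)
print(shortest_path(graph, "Obama", "Islam"))
print(support_score(graph, "Obama", "Islam"))
print(support_score(graph, "Obama", "Michelle Obama"))
```

In this toy network, directly connected nouns get the maximum score of 1, while the long route from "Obama" to "Islam" through the well-connected "Canada" node scores much lower.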

Roundabout route

An automated fact-checker judges the assertion “Barack Obama is a Muslim” by studying degrees of separation between the words “Obama” and “Islam” in a noun network built from Wikipedia info. The very loose connection between these two nouns suggests the statement is false.

C. CHANG

WIKIPEDIA

Source: G.L. Ciampaglia et al/PLOS ONE 2015

But estimating the veracity of statements based on this kind of subject-object separation has limits. For instance, the system deemed it likely that former President George W. Bush is married to Laura Bush. Great. It also decided George W. Bush is probably married to Barbara Bush, his mother. Less great. Ciampaglia and colleagues have been working to give their program a more nuanced view of the relationships between nouns in the network.

Verifying every statement in an article isn’t the only way to see if a story passes the smell test. Writing style may be another giveaway. Benjamin Horne and Sibel Adali, computer scientists at Rensselaer Polytechnic Institute in Troy, N.Y., analyzed 75 true articles from media outlets deemed most trustworthy by Business Insider, as well as 75 false stories from sites on a blacklist of misleading websites. Compared with real news, false articles tended to be shorter and more repetitive with more adverbs. Fake stories also had fewer quotes, technical words and nouns.

Based on these results, the researchers created a computer program that used the four strongest distinguishing factors of fake news (number of nouns, number of quotes, redundancy and word count) to judge article veracity. The program, presented at last year’s International Conference on Web and Social Media in Montreal, correctly sorted fake news from true 71 percent of the time. For comparison, a program that sorted fake news from true at random would show about 50 percent accuracy. Horne and Adali are looking for additional features to boost accuracy.
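To make the style-based approach concrete, here is a rough Python sketch of how three of those four features might be measured for a piece of text. It is an illustration under stated assumptions, not the researchers' program: the function name `style_features` is hypothetical, "redundancy" is approximated as the share of words that repeat an earlier word, and the noun count is left out because it would require a part-of-speech tagger. The sketch only extracts features; it does not classify articles.

```python
import re


def style_features(text):
    """Return simple style measurements for one article (assumed definitions)."""
    # Words: runs of letters and apostrophes, lowercased.
    words = re.findall(r"[A-Za-z']+", text.lower())
    word_count = len(words)

    # Assumed redundancy proxy: fraction of words that repeat an earlier word.
    redundancy = 1 - len(set(words)) / word_count if word_count else 0.0

    # Quote count: passages enclosed in straight or curly double quotes.
    quote_count = len(re.findall(r'"[^"]*"|“[^”]*”', text))

    return {
        "word_count": word_count,
        "redundancy": redundancy,
        "quote_count": quote_count,
    }


sample = 'The cat saw the cat. "Hello," she said.'
print(style_features(sample))
```

A classifier built on such measurements would still need labeled true and false articles to learn which feature values signal fake news.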

Verónica Pérez-Rosas, a computer scientist at the University of Michigan in Ann Arbor, and colleagues compared 240 genuine and 240 made-up articles. Like Horne and Adali, Pérez-Rosas’ team found more adverbs in fake news articles than in real ones. The fake news in this analysis, reported at arXiv.org on August 23, 2017, also tended to use more positive language and express more certainty.